Artificial intelligence is transforming images, voices and video into manipulable data. As synthetic media spreads across the internet, societies are confronting a difficult question. If visual evidence itself can no longer be trusted, what happens to truth in the digital age?

The Moment When Images Stopped Being Proof

For more than a century photographs carried an authority that few other forms of evidence could match. When a camera captured a moment it appeared to freeze reality itself. Courts accepted photographs as documentation of events. Journalists used images to verify reports from distant locations. Historical archives preserved visual records that helped societies remember their past. A photograph was not merely an illustration but a witness. It confirmed that something had happened at a specific time and place. The cultural belief that seeing is believing was not simply a figure of speech. It reflected a deeply rooted assumption that visual media represented an objective window into the world.

The digital revolution expanded that culture of visual documentation to a scale that earlier generations could scarcely imagine. Cameras became smaller and cheaper until they were eventually embedded inside smartphones carried by billions of people. Everyday life began to unfold through lenses. Family celebrations, protests, political speeches and unexpected events were all captured instantly and shared across social networks. Images and videos became the primary language of online communication. The internet gradually transformed into a vast global archive of visual experience in which countless moments were recorded and distributed every minute.

Yet beneath this expansion of digital communication another transformation was quietly taking place. When images and videos became digital files they also became data. Unlike film photographs or magnetic tapes, digital media could be copied without loss of quality, altered with software and transmitted across networks free of the constraints of a physical medium. At first these capabilities were celebrated as creative tools. Photographers could adjust lighting or color. Filmmakers could enhance visual effects. Graphic designers could manipulate images with a level of precision that earlier generations never possessed. The power to modify digital media appeared to be an extension of artistic possibility.

Artificial intelligence has now taken that transformation much further. Advances in machine learning have produced systems capable not only of editing existing media but of generating entirely new images, voices and videos that appear convincingly real. Neural networks trained on large datasets can learn the patterns that define a human face or a person’s voice. Once those patterns are learned the systems can produce new visual or audio material that imitates those characteristics with remarkable accuracy. In practical terms this means that a video clip can depict a person speaking words they never actually said. A photograph can portray an event that never occurred. A voice recording can reproduce the sound of someone who never participated in the conversation.

This new category of synthetic media is commonly known as deepfake technology. The name, a blend of “deep learning” and “fake”, originates from early experiments in which deep learning models were used to replace faces in video footage. What began as a niche experiment within artificial intelligence research has evolved into a powerful and widely accessible tool. Software capable of generating realistic faces or cloning voices now exists in open-source libraries and consumer applications. Tutorials explaining how to use such systems circulate widely across developer forums and online video platforms. As computing power continues to become cheaper, the ability to generate synthetic media is spreading rapidly beyond specialized research environments.

The consequences of this shift are profound because they challenge the assumption that visual documentation can reliably represent reality. A photograph once implied that light had reflected from physical objects onto a camera sensor at a particular moment in time. In the deepfake era that assumption no longer holds. Images and videos can now be synthesized entirely through algorithms without any connection to a real event. A convincing video may be nothing more than the output of a machine learning model trained on thousands of images collected from the internet. The boundary between documentation and fabrication begins to blur.

This transformation raises difficult questions about identity and trust in digital environments. Personal images that individuals share online may become raw material for artificial intelligence systems capable of generating manipulated content. A photograph taken from a social media profile might be incorporated into a synthetic video or used to train a model that replicates facial expressions. Voice recordings from interviews or public appearances could be analyzed by algorithms designed to reproduce speech patterns. Once digital media enters the vast ecosystem of online data it may acquire uses far removed from the intentions of the person who originally created it.

The social implications of these technologies are already visible. Investigations conducted by digital rights organizations have repeatedly found that malicious deepfake content often targets women. Photographs taken from social media accounts can be manipulated to produce fabricated images or videos that are then circulated across the internet. Victims frequently have little control over how these altered representations spread once they appear online. Even when such material is eventually removed, the reputational harm and emotional distress can persist for years. In this sense synthetic media does not merely introduce a technical problem but intensifies existing patterns of harassment and exploitation within digital spaces.

Beyond individual harm the rise of deepfake technology has also begun to erode a broader foundation of trust. Modern societies rely heavily on visual evidence when establishing factual narratives about events. News organizations depend on images and video recordings to verify breaking stories. Law enforcement agencies analyze footage when investigating crimes. Courts frequently consider visual documentation when evaluating testimony. If images and recordings can be generated artificially with increasing realism the credibility of visual evidence itself becomes uncertain. The result is a fragile information environment in which authentic documentation may be dismissed as fabricated while fabricated content may be accepted as authentic.

Researchers sometimes describe this situation as the collapse of visual trust. When the authenticity of images becomes difficult to verify the entire ecosystem of digital communication begins to shift. Public debates may be shaped by videos that appear convincing but lack any connection to reality. Political actors may dismiss genuine recordings as artificial creations. The boundaries that once separated fact from fabrication become increasingly ambiguous. In such an environment the internet faces a challenge far more complex than the existence of a single technology. It must confront the possibility that the very mechanisms through which visual information circulates may need to be reconsidered.

The emergence of synthetic media therefore forces a deeper reflection about the structure of the digital world itself. For decades online platforms were designed with a single dominant goal. They were built to make communication as open and frictionless as possible. Images and videos could be shared instantly across global networks. The faster information moved the more successful the platforms became. Yet the deepfake era reveals that unlimited circulation of digital media also creates vulnerabilities. When images are easily accessible and widely distributed they may also be extracted, manipulated and repurposed in ways that their creators never anticipated. Understanding how this architecture functions is essential for understanding why synthetic media has become such a powerful force within modern information ecosystems. The challenge posed by deepfakes is not only about artificial intelligence itself. It is also about the structure of the platforms that host and distribute digital media across the world.

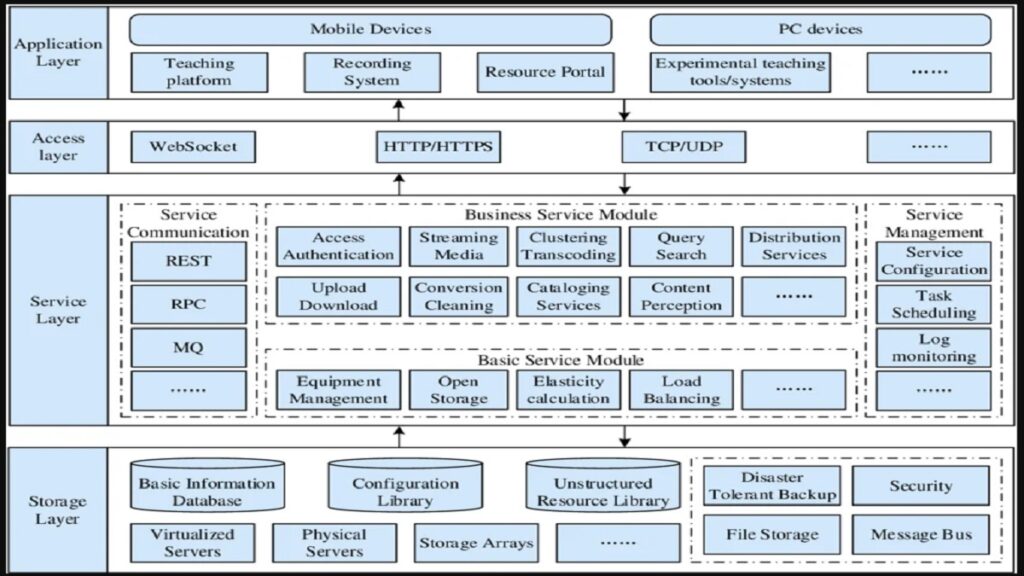

The Architecture of the Sharing Internet

The spread of synthetic media cannot be understood without examining the structure of the digital environment through which images and videos move. When social networking platforms first began to dominate the internet their primary objective was remarkably simple. They were designed to remove friction from communication. A photograph taken on a phone could be uploaded instantly. A video clip could be shared with thousands of people in seconds. A message written in one country could be read across the world almost immediately. The easier it became to post and distribute content the faster these networks expanded. Platforms competed to ensure that nothing slowed the flow of information between users. In the early years this design philosophy appeared almost universally beneficial. Communication was democratized. Anyone with a smartphone could become a publisher. Moments that once remained private could now be witnessed by a global audience.

Behind this culture of effortless sharing lies a technical architecture that treats media as highly accessible digital objects. When a user uploads an image or video the platform typically stores that file on its servers and assigns it a specific network address that allows it to be retrieved whenever required. This system allows images to appear instantly in feeds and enables users to distribute links across multiple websites. While such architecture is efficient it also means that once media enters the digital ecosystem it often becomes easier to copy or extract than most people realize. Files can be downloaded by automated programs. Images can be cached by browsers or mirrored across different networks. Video frames can be separated and processed by other systems. In practical terms the internet has gradually accumulated an enormous reservoir of visual material that exists in countless locations simultaneously.
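The storage pattern described above can be sketched in a few lines of Python. This is only a toy model under stated assumptions: the `PublicMediaStore` class, the `media.example.com` address and the hash-derived identifier are hypothetical and do not describe any particular platform. What it illustrates is that a retrievable address with no access check serves an automated scraper exactly as it serves the intended audience.

```python
import hashlib


class PublicMediaStore:
    """Toy in-memory stand-in for a platform's media storage (hypothetical)."""

    def __init__(self, base_url="https://media.example.com"):
        self.base_url = base_url
        self._files = {}

    def upload(self, data: bytes) -> str:
        # Derive a stable identifier from the file contents (an assumption;
        # real services may assign identifiers in other ways).
        media_id = hashlib.sha256(data).hexdigest()[:16]
        self._files[media_id] = data
        return f"{self.base_url}/{media_id}"

    def fetch(self, url: str) -> bytes:
        # No check of who is asking: possession of the URL is enough.
        media_id = url.rsplit("/", 1)[-1]
        return self._files[media_id]


store = PublicMediaStore()
url = store.upload(b"example-photo-bytes")

# A friend following the link and a scraper crawling it get identical bytes.
copy_for_friend = store.fetch(url)
copy_for_scraper = store.fetch(url)
```

In this sketch nothing distinguishes the two fetches, which is the sense in which openly addressable media is easy to copy or extract.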

For many years this accessibility was considered an essential feature of online culture rather than a vulnerability. Viral images and widely shared videos became defining symbols of the social media era. A photograph captured during a protest or a natural disaster could circulate across continents within minutes, informing people who were thousands of kilometers away. Citizen journalism flourished because ordinary individuals could document events without relying on traditional media institutions. The open circulation of media allowed information to move rapidly during moments of crisis or political upheaval. Few observers imagined that the same openness might eventually create structural weaknesses in the digital environment.

Artificial intelligence has changed how that openness is interpreted. Machine learning systems depend heavily on vast quantities of data. To learn how human faces look under different lighting conditions or how voices sound across different emotional tones, neural networks must analyze enormous collections of examples. The internet inadvertently became one of the largest datasets ever assembled. Photographs posted on social media platforms, video recordings uploaded to sharing sites and voice clips embedded in countless digital archives provided the raw material that researchers used to train generative models. Over time the boundaries between publicly shared media and machine learning datasets began to blur. Images that were originally intended for friends or followers could be incorporated into training data used to develop powerful artificial intelligence systems.

The relationship between open media systems and artificial intelligence therefore became deeply intertwined. The more visual data circulated across digital platforms the easier it became to train models capable of replicating those patterns. Synthetic media technologies draw upon this immense archive of human expression that has accumulated across decades of online activity. Every photograph uploaded to a network potentially contributes to a dataset that helps machines learn how faces appear, how bodies move and how expressions change. This does not mean that individual users consciously participate in the creation of such systems, yet their digital presence forms part of the informational landscape upon which artificial intelligence is built.

Another crucial element of modern platform architecture concerns the algorithms that determine which content people see. Social networks rarely display posts in simple chronological order. Instead they rely on recommendation systems that analyze patterns of engagement across billions of interactions. Algorithms evaluate how long users watch a video, which images receive comments and which posts are shared repeatedly. Content that generates strong reactions tends to be promoted more widely because it keeps people engaged with the platform for longer periods of time. From a business perspective this approach proved extremely effective. Platforms that successfully captured user attention could support large advertising ecosystems built around targeted marketing.

Yet the same algorithmic logic can produce unintended consequences when synthetic media enters the system. A manipulated image that provokes anger or curiosity may travel faster than verified information simply because it attracts attention. Algorithms designed to maximize engagement do not inherently distinguish between authentic documentation and fabricated content. As long as users react strongly, the system interprets the response as a signal that the material should be shown to more people. In this way the architecture of attention-driven platforms can amplify the reach of manipulated media even when the original creators of the system never intended such outcomes.
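A small Python sketch can make this blindness to authenticity concrete. It is a deliberately simplified illustration, not how any real recommendation system works: the `Post` fields, the weights in `engagement_score` and the sample numbers are all invented for the example. The point is only that the score is computed from reactions alone, so the `authentic` flag never influences the ordering.

```python
from dataclasses import dataclass


@dataclass
class Post:
    title: str
    authentic: bool       # known here for illustration; the ranker never reads it
    watch_seconds: float
    comments: int
    shares: int


def engagement_score(post: Post) -> float:
    # Hypothetical weights: shares count most, then comments, then watch time.
    return 0.1 * post.watch_seconds + 1.0 * post.comments + 2.0 * post.shares


def rank_feed(posts):
    # Highest engagement first; authenticity plays no part in the ordering.
    return sorted(posts, key=engagement_score, reverse=True)


posts = [
    Post("Verified report", authentic=True, watch_seconds=40.0, comments=5, shares=3),
    Post("Fabricated outrage clip", authentic=False, watch_seconds=55.0, comments=30, shares=25),
]
feed = rank_feed(posts)
```

With these invented numbers the fabricated clip is placed first because it drew more reactions. Changing that outcome would require the score to draw on some signal of authenticity, which an engagement-only objective does not supply.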

The economic model underlying these platforms reinforces the importance of engagement. Advertising remains the primary source of revenue for many digital networks. Advertisers are willing to pay more when they can reach audiences that are precisely defined by interests and behavior patterns. To deliver such targeted advertisements platforms collect detailed information about how individuals interact with content. Every click, pause and scroll becomes part of a behavioral dataset that describes user preferences with increasing precision. This constant monitoring allows algorithms to predict which images or messages will keep a person engaged for longer periods of time.

As a result the architecture of the modern internet performs two functions simultaneously. It distributes media across global networks while also generating data about the individuals who consume that media. Images and videos therefore operate both as communication tools and as sources of behavioral information. When a photograph spreads widely it not only reaches large audiences but also produces signals about how those audiences react. The system learns from these interactions and adjusts future recommendations accordingly. Over time the platform becomes increasingly adept at predicting which content will capture attention.

The intersection of open media circulation, artificial intelligence development and behavioral data collection creates a complex digital environment in which synthetic media can thrive. Deepfake technologies rely on large datasets of images and voices that often originate from the same networks where manipulated content later spreads. Engagement-driven algorithms may unintentionally accelerate the circulation of sensational or controversial material. Meanwhile the economic incentives that sustain digital platforms encourage the continuous collection of data about user interactions. Each of these elements evolved for reasons that initially appeared beneficial. Together they form a system that is extraordinarily powerful but also increasingly difficult to govern.

Recognizing these structural dynamics has led researchers and technologists to reconsider some of the assumptions that guided the early design of social media platforms. Openness and frictionless sharing once seemed unquestionably positive because they enabled global communication on an unprecedented scale. Today the same features raise questions about whether individuals retain sufficient control over their own digital identities. When personal images can be copied, analyzed and manipulated by algorithms the architecture of the internet itself becomes part of the debate about digital safety.

The deepfake phenomenon therefore represents more than a technological curiosity. It exposes the intricate relationship between artificial intelligence, data economies and the design of digital platforms. Understanding this relationship is essential for any attempt to address the challenges posed by synthetic media. If the architecture of the internet encourages unrestricted circulation of images and continuous collection of behavioral data then solutions may require more than improved detection algorithms. They may require rethinking how digital systems manage the visibility of personal information and how media moves across networks.

This realization is gradually shifting the conversation about the future of the internet. Increasingly the focus is moving away from individual pieces of content toward the structural properties of the platforms that host them. Instead of asking only how to remove harmful material analysts are beginning to ask how digital environments might be designed so that such material becomes harder to produce or distribute in the first place. The answer to that question may determine how societies navigate the challenges of the deepfake era.

The Surveillance Internet and the Data Extraction Economy

As the internet matured from a network of information exchange into a system dominated by large digital platforms, another transformation quietly unfolded beneath the surface. Images, videos and conversations were no longer simply forms of communication. They became sources of behavioral signals. Every interaction with digital content revealed something about the person viewing it. A user who paused on a video for several seconds demonstrated interest. A person who repeatedly watched similar content revealed patterns of preference. Comments, shares and reactions all contributed to a continuous stream of data that described how people responded to the information flowing through online networks. When billions of individuals participate in such interactions every day the resulting datasets become extraordinarily detailed maps of human attention.

The economic model that emerged from this environment reshaped the architecture of the modern internet. Many of the largest technology platforms realized that the true value of their systems did not lie only in hosting communication but in analyzing how users interacted with that communication. By observing behavioral patterns across vast populations algorithms could predict which types of images, videos or messages were most likely to capture attention. These predictions allowed platforms to optimize the order in which content appeared on screens and to refine advertising systems that delivered targeted messages to specific audiences. Over time a new digital economy formed in which attention itself became one of the most valuable resources on the internet.

Within this attention-driven economy personal data functions as a crucial raw material. Behavioral tracking technologies monitor how individuals navigate digital spaces, which posts they linger on and which conversations they ignore. The data generated through these interactions is used to construct detailed profiles that describe interests, habits and emotional responses. These profiles make it possible for advertising systems to reach audiences with remarkable precision. A company promoting a new product can target users who have previously shown interest in similar topics. Political campaigns can deliver messages tailored to particular groups of voters. From a commercial perspective the system is highly efficient because it connects advertisers directly with audiences that are statistically likely to respond.

Yet the same system also raises fundamental questions about privacy and autonomy. Many users participate in social platforms believing they are simply sharing experiences with friends or following news from around the world. At the same time their interactions contribute to complex behavioral datasets that may influence how information is presented to them in the future. Recommendation algorithms continuously learn from user activity and adjust the flow of content accordingly. Over time individuals may find themselves navigating information environments that are subtly shaped by predictive models designed to keep them engaged. The more effectively these systems capture attention the more valuable the resulting data becomes.

The relationship between behavioral data and the circulation of digital media becomes particularly important in the context of synthetic content. Platforms that prioritize engagement often amplify material that provokes strong emotional reactions. Sensational images or controversial videos tend to attract more interaction than carefully verified information. Algorithms interpreting these interactions as signals of relevance may promote such material more widely across networks. In environments where deepfake technologies make it possible to generate convincing but fabricated media the potential for manipulation increases significantly. A synthetic video that triggers outrage or curiosity can spread quickly through algorithmic recommendation systems before its authenticity is questioned.

Some researchers describe this phenomenon as a structural feature of the surveillance-based internet. The term does not necessarily imply deliberate monitoring in a traditional sense but reflects the continuous observation of user behavior required to sustain the data-driven model. Every click, pause and reaction becomes part of a feedback loop that allows platforms to refine their predictive algorithms. The system is capable of learning from billions of interactions and adjusting its behavior accordingly. From an engineering perspective the infrastructure is remarkably sophisticated. From a societal perspective it introduces new questions about how much visibility digital platforms should have into the lives and preferences of their users.

These questions become even more complex when viewed through a global lens. In many parts of the world a small number of technology companies provide the primary infrastructure through which citizens communicate and share information. Social networks, video platforms and messaging services form the backbone of digital interaction for billions of people. While these systems connect societies across continents they also concentrate enormous influence within relatively few organizations. Data generated by users in one region may be stored and processed in another. Algorithms developed in distant technology hubs shape the information environments of entire populations.

This concentration of digital power has led to growing discussions about the concept of data sovereignty. Governments and policy analysts increasingly ask where the information generated by citizens is stored and who ultimately controls it. When behavioral data becomes a central economic asset the location and governance of that data acquire geopolitical significance. Countries with rapidly expanding internet populations may contribute vast quantities of digital information to global platforms while possessing limited influence over how that data is used. In such circumstances the digital economy begins to intersect with broader debates about technological independence and regional development.

The emergence of synthetic media technologies intersects with these issues in subtle but important ways. Deepfake systems depend heavily on the availability of large datasets that describe human faces, voices and expressions. Much of this material originates from the same digital platforms that collect behavioral data about user interactions. The vast visual archives of the internet therefore serve two purposes simultaneously. They support communication among individuals while also providing the informational resources required to train powerful artificial intelligence models. When manipulated media later circulates through the same networks, the boundaries between user-generated content, machine learning datasets and algorithmically distributed information become increasingly difficult to distinguish.

Understanding this interconnected system is essential for grasping why the deepfake era poses such a significant challenge. Synthetic media does not emerge in isolation. It develops within an ecosystem shaped by open media circulation, engagement-driven algorithms and extensive behavioral data collection. Each element reinforces the others. Images shared widely across networks become training material for artificial intelligence. Algorithms designed to maximize engagement accelerate the spread of visually striking content. Behavioral data systems refine predictions about which images will attract attention. Together these mechanisms create a digital environment that is extraordinarily dynamic yet increasingly difficult to regulate.

In response to these developments a growing number of technologists have begun to explore whether the next phase of the internet might require different architectural principles. If the current model depends heavily on unrestricted data collection and open media circulation, alternative approaches may seek to reduce those dependencies. Instead of designing platforms around the continuous extraction of behavioral information, engineers might experiment with systems that limit how much data is visible to the platform itself. Instead of allowing personal images to circulate freely through public links, digital environments could be structured so that media remains under greater control of the individuals who created it.

Such ideas represent an emerging shift in how the future of digital infrastructure is imagined. For many years debates about the internet focused primarily on how platforms should regulate content after it appeared online. The rise of synthetic media and large-scale behavioral data economies has encouraged a different perspective. Increasingly the conversation revolves around whether the design of digital systems can prevent certain forms of misuse from occurring in the first place. This architectural approach does not eliminate the need for policy or moderation but suggests that technological design may play an equally important role in shaping safer digital environments.

The deepfake era therefore reveals something deeper about the evolution of the internet. Technologies that once appeared to be neutral communication tools now influence how societies understand truth, identity and information. The systems that distribute images and videos across global networks also shape the economic and political dynamics surrounding those media. As artificial intelligence continues to evolve, the challenge facing technologists is not merely to detect fabricated content but to reconsider how digital ecosystems function at their most fundamental level.

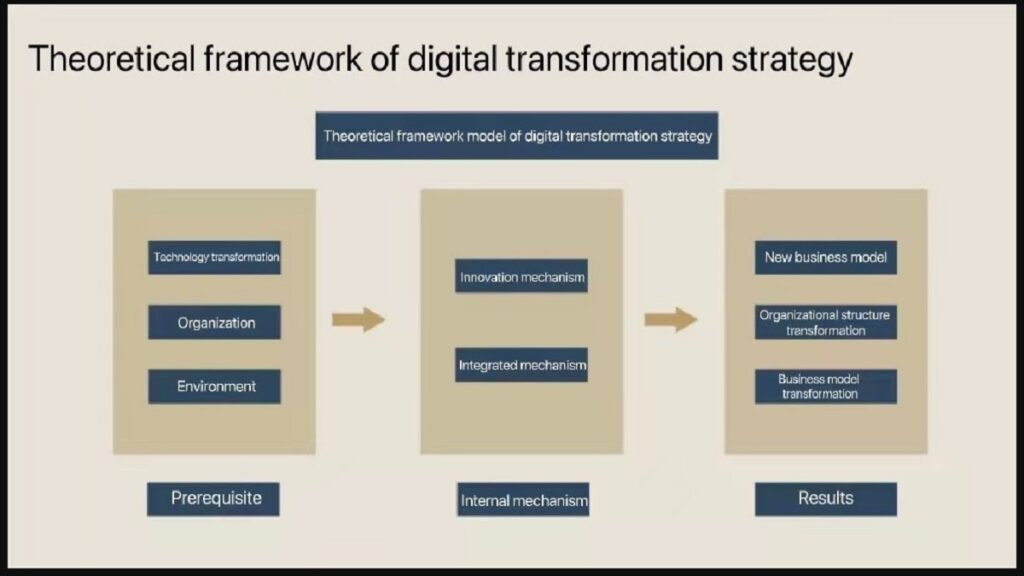

Rethinking the Architecture of Digital Platforms

The growing influence of synthetic media has forced technologists and policymakers to confront a difficult realization. The challenges associated with deepfakes cannot be addressed solely through detection tools or reactive moderation. For many years the dominant strategy of online platforms has been to allow information to circulate freely and then intervene when harmful content appears. Moderation teams review reported material, automated filters attempt to identify problematic posts and regulatory frameworks impose obligations on companies to remove certain types of content. While these measures are important they operate primarily after a problem has already emerged. In a digital environment where millions of images and videos are uploaded every hour and artificial intelligence systems can generate convincing media within seconds this reactive approach increasingly struggles to keep pace with the scale of the challenge.

The reason lies in the structural nature of digital communication itself. Once a piece of media enters the online ecosystem it can travel across networks with extraordinary speed. A single image may be copied, downloaded and redistributed countless times within minutes. Even if the platform where the content first appeared eventually removes it the same material may continue to circulate elsewhere in altered forms. The architecture of the internet was designed to make information move easily and that design has proven extraordinarily successful in connecting societies around the world. Yet the deepfake era demonstrates that the same openness can create vulnerabilities when the authenticity of visual media becomes uncertain.

In response to this reality a growing number of researchers have begun to explore whether digital safety must be addressed at the architectural level rather than only through moderation policies. The idea behind this approach is simple but profound. Instead of building systems that collect vast amounts of data and expose personal media widely across networks, developers might design platforms where privacy and data protection are embedded directly into the technological structure. In such environments personal images would not be easily extractable through publicly accessible links. User data would not be continuously analyzed to construct behavioral profiles. Encryption mechanisms could ensure that sensitive information remains protected even within the infrastructure of the platform itself.
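One way to picture media that is not extractable through a public link is a link that is valid only for a named viewer and only for a limited time. The sketch below shows that idea in Python using an HMAC signature. It is one possible technique among several and is not drawn from any specific platform: the `SECRET_KEY` value, the function names and the identifiers such as `photo-1` and `alice` are hypothetical, and a real system would also need authentication, key management and protection against re-uploads of downloaded copies.

```python
import hashlib
import hmac
import time

# Demo-only key (hypothetical); a real service would keep this secret and rotate it.
SECRET_KEY = b"demo-only-secret"


def sign_link(media_id: str, viewer_id: str, expires_at: int) -> str:
    # Bind the signature to the media item, the intended viewer and an expiry time.
    message = f"{media_id}:{viewer_id}:{expires_at}".encode()
    return hmac.new(SECRET_KEY, message, hashlib.sha256).hexdigest()


def verify_link(media_id, viewer_id, expires_at, signature, now=None) -> bool:
    now = time.time() if now is None else now
    if now > expires_at:
        return False  # the link has expired
    expected = sign_link(media_id, viewer_id, expires_at)
    # Constant-time comparison avoids leaking information through timing.
    return hmac.compare_digest(expected, signature)


expires_at = 1_000
signature = sign_link("photo-1", "alice", expires_at)

ok_for_alice = verify_link("photo-1", "alice", expires_at, signature, now=900)
ok_for_other = verify_link("photo-1", "mallory", expires_at, signature, now=900)
ok_after_expiry = verify_link("photo-1", "alice", expires_at, signature, now=1_100)
```

Here the link works for the intended viewer before expiry and fails for a different viewer or after the deadline. The design choice is that access depends on who is asking and when, so a copied URL alone is no longer sufficient.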

This concept is sometimes described as structural privacy or privacy-oriented architecture. The distinction between this approach and earlier models is significant. Traditional platforms often treat privacy as a set of optional settings that users can configure after joining a service. Structural privacy reverses that logic by making protection the default condition of the system. The design of the platform itself limits how much information can be collected or exposed. In practical terms this means that the possibility of misuse is reduced not by relying entirely on rules and enforcement but by restricting the technical pathways through which such misuse can occur.

Such architectural thinking also intersects with broader debates about the role of behavioral data in the digital economy. The attention-driven model that dominates many social platforms relies heavily on the continuous observation of user interactions. Algorithms analyze these interactions to determine which content should appear in a person’s feed and which advertisements should accompany it. While this system has supported the rapid growth of online services, it also encourages extensive data collection and detailed profiling of individual behavior. As societies become more aware of how such profiling operates, some technologists have begun to question whether alternative models could sustain digital ecosystems without depending on large-scale surveillance of user activity.

The deepfake era adds urgency to this discussion because the circulation of manipulated media interacts directly with the mechanisms of attention-driven platforms. Algorithms that reward engagement may unintentionally amplify synthetic content that provokes strong emotional reactions. If digital environments are redesigned to reduce unnecessary exposure of personal media and limit behavioral tracking, the incentives that drive such amplification could also change. In this sense the challenge of synthetic media becomes intertwined with the broader evolution of digital platform architecture.

Another dimension of this debate concerns the global distribution of digital infrastructure. Over the past two decades, a relatively small number of technology companies have come to dominate the platforms through which billions of people communicate online. These systems connect societies across continents, yet they also centralize vast quantities of data within corporate ecosystems. As artificial intelligence technologies continue to develop, the value of such data grows even further. Governments and researchers in several regions have therefore begun to examine how digital infrastructure might evolve in ways that preserve both connectivity and local autonomy over data generated by citizens.

Within this context, discussions about new architectural models extend beyond technical considerations. They also reflect questions about trust, governance and the future of digital communication. If individuals feel that the systems hosting their images and conversations expose them to manipulation or misuse, they may become increasingly cautious about participating in online environments. Rebuilding confidence in digital platforms therefore requires demonstrating that technology can protect personal dignity as well as enable global connectivity.

The search for such balance is likely to shape the next stage of the internet’s evolution. Artificial intelligence will continue to expand the capabilities of digital media creation. Synthetic images, voices and video sequences will become more sophisticated and more accessible. At the same time societies will demand stronger assurances that personal data and visual identity cannot be exploited without consent. The tension between these forces will influence how engineers design the platforms that define the digital experience of future generations.

For young people who have grown up in a world where identity is expressed largely through digital media, the outcome of this transformation carries particular significance. Photographs and videos are central to how friendships are maintained, how communities organize and how individuals present themselves to the world. If these forms of expression become vulnerable to manipulation, the consequences extend far beyond technical inconvenience. They affect personal dignity, social trust and the willingness of individuals to share their lives online. Ensuring that digital environments remain safe spaces for such expression is therefore not merely a technical challenge but a societal responsibility.

The deepfake era has thus revealed something fundamental about the internet itself. The technologies that once appeared to be neutral tools for communication have become powerful systems capable of shaping how information is created, distributed and believed. When those systems encounter new forms of artificial intelligence, their strengths and weaknesses become visible at a global scale. Addressing the challenges posed by synthetic media will require not only new detection technologies but also a deeper reconsideration of how digital platforms are built.

The future of the internet may ultimately depend on whether engineers, policymakers and societies are willing to rethink the architectural assumptions that guided its early expansion. The networks that connected the world over the past two decades were designed primarily to maximize openness and speed. The next generation of digital infrastructure may need to place equal emphasis on privacy, resilience and responsible stewardship of personal data. If such balance can be achieved, the internet may continue to function as one of the most transformative communication systems in human history while preserving the trust upon which meaningful communication depends.